HPE have just released SSMC 3.4. It looks like a small update, but it brings a big change and many new features, so I would say the right version number should have been 4.0.

This guest post is brought to you by Armin Kerl. If you fancy trying your hand at blogging, check out our guest posting opportunities.

What’s New in SSMC 3.4?

The key new features in SSMC are:

- Appliance Deployment – Now a Linux based appliance VM

- New Setup Sizing Guidelines – Small, medium or large deployments available depending on the number of 3PAR systems you are managing

- InfoSight Integration – Tighter integration with InfoSight

- Performance Analytics – Includes simplified reporting indicating whether the system is overstretched or performing well

- Replication – Enhancements to management

Let us take a deeper look at the new features:

Download SSMC

You can download the latest 3.4 version of SSMC from the HPE Software Depot. The download is an ISO with the name format HPESSMC-.iso. When you mount the ISO you will find the appliance image file (HPE_SSMC__VMware_Image) plus the migration tool (HPE_SSMC__Migration_Tool), which allows you to migrate your current settings to the new appliance.

SSMC Appliance

In the past, we installed SSMC as a service on an existing Windows or Linux host; now it is a standalone Linux-based appliance. The platforms currently supported for running the appliance are VMware ESXi 6.0, 6.5 or 6.7 and Microsoft Hyper-V Server 2012 or 2016. The 3PAR OS can be 3.2.1, 3.2.2 or 3.3.1.
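If you want to check an environment against this list before deploying, a minimal sketch could look like the one below. The function name `is_supported` and the plain string matching are my own assumptions for illustration; this is not an HPE tool, and it only knows about the versions listed in this post.

```python
# Versions copied from the paragraph above; anything else is treated as unsupported.
# Matching is by exact string, which is an assumption made for simplicity.
SUPPORTED_HYPERVISORS = {
    "VMware ESXi": {"6.0", "6.5", "6.7"},
    "Microsoft Hyper-V Server": {"2012", "2016"},
}
SUPPORTED_3PAR_OS = {"3.2.1", "3.2.2", "3.3.1"}


def is_supported(hypervisor: str, hypervisor_version: str, array_os: str) -> bool:
    """Return True if both the hypervisor and the 3PAR OS appear in the lists above."""
    versions = SUPPORTED_HYPERVISORS.get(hypervisor, set())
    return hypervisor_version in versions and array_os in SUPPORTED_3PAR_OS


if __name__ == "__main__":
    print(is_supported("VMware ESXi", "6.5", "3.3.1"))
    print(is_supported("VMware ESXi", "5.5", "3.3.1"))
```

Always confirm against the official support matrix, as maintenance updates and later releases may change what is supported.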

SSMC Deployment

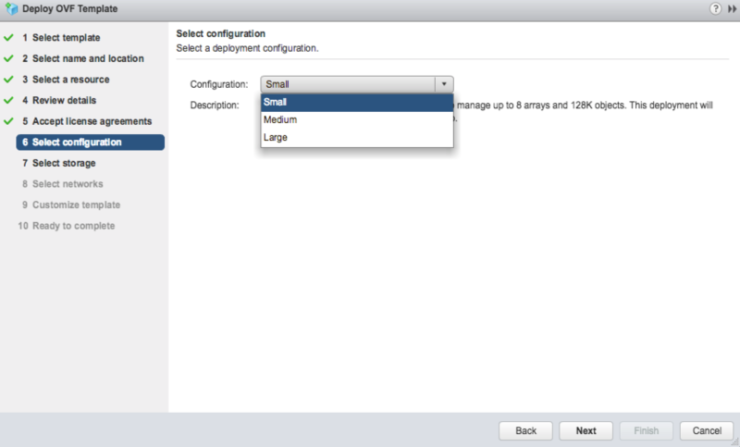

During the deployment you can choose the initial settings, such as the IP address, and pick one of three sizing options:

Small

Use the Small deployment option to manage up to 8 arrays and 128K objects. This deployment needs 4 vCPUs, 16GB memory and a minimum of 500GB disk space.

Medium

With the Medium deployment option, you can manage up to 16 arrays and 256K objects. This deployment needs 8 vCPUs, 32GB memory and a minimum of 500GB disk space.

Large

The Large deployment option allows management of up to 32 arrays and 500K objects. This deployment needs 16 vCPUs, 32GB memory and a minimum of 500GB disk space.
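To make the sizing choice concrete, here is a small sketch that picks the smallest option covering a given number of arrays and objects. The figures come straight from the three options above; the names `DeploymentSize` and `pick_size` are hypothetical, and treating "128K" as 128,000 is an assumption on my part.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeploymentSize:
    name: str
    max_arrays: int
    max_objects: int
    vcpus: int
    memory_gb: int
    min_disk_gb: int


# Limits taken from the Small / Medium / Large descriptions in this post.
# "K" is treated as 1,000 here, which is an assumption.
SIZES = [
    DeploymentSize("Small", 8, 128_000, 4, 16, 500),
    DeploymentSize("Medium", 16, 256_000, 8, 32, 500),
    DeploymentSize("Large", 32, 500_000, 16, 32, 500),
]


def pick_size(arrays: int, objects: int) -> DeploymentSize:
    """Return the smallest option whose array and object limits both fit."""
    for size in SIZES:  # ordered from smallest to largest
        if arrays <= size.max_arrays and objects <= size.max_objects:
            return size
    raise ValueError("Requirements exceed the Large deployment option")


if __name__ == "__main__":
    choice = pick_size(arrays=10, objects=90_000)
    print(f"{choice.name}: {choice.vcpus} vCPUs, {choice.memory_gb}GB memory, "
          f"at least {choice.min_disk_gb}GB disk")
```

Note that either limit can push you up a size: 8 arrays with 300,000 objects would land on Large even though the array count alone fits Small.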

SSMC Migration

If you are already running SSMC 3.2 or 3.3, you can use the migration tool contained in the SSMC ISO. Install it on the same host where the older SSMC is installed and use it to migrate the configuration to the new appliance.

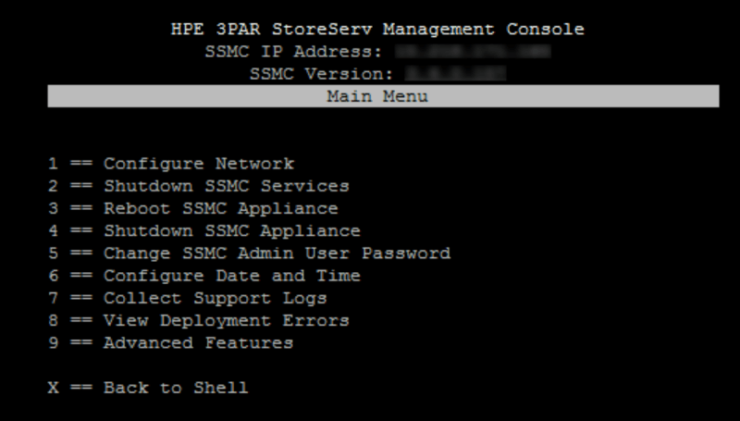

The Text-based User Interface (TUI)

Once the SSMC appliance has been deployed using the initial setup wizard, you make any further configuration changes through the Text-based User Interface (TUI). To access the TUI, open the console of the SSMC appliance and log on with the username ssmcadmin. You can find the password for the ssmcadmin account on page 55 of the Admin Guide.

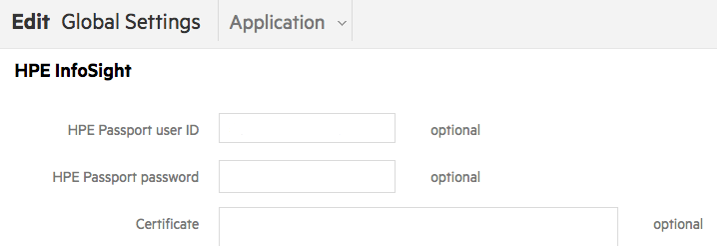

InfoSight Integration

If configured, SSMC is now able to pull information from HPE InfoSight back into the SSMC console. This brings advanced system performance and reporting analytics into SSMC. To use it, go to “Global Settings > Application” in SSMC and enable InfoSight, as described previously in Configuring 3PAR with InfoSight.

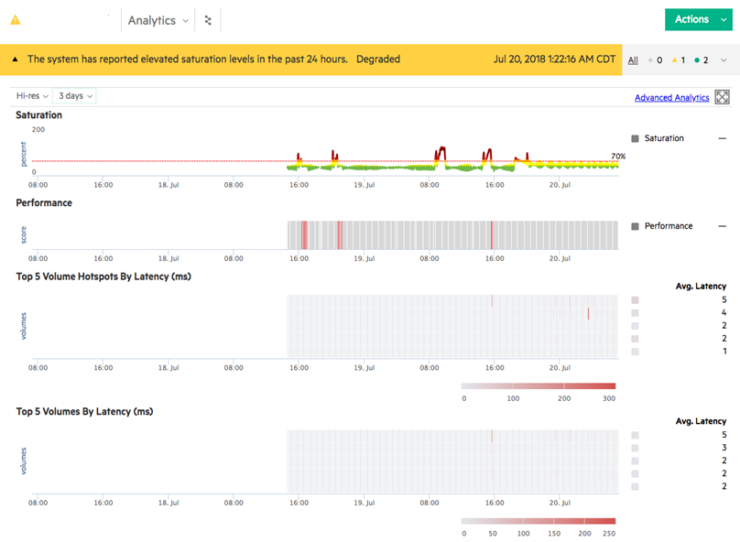

Performance Analytics

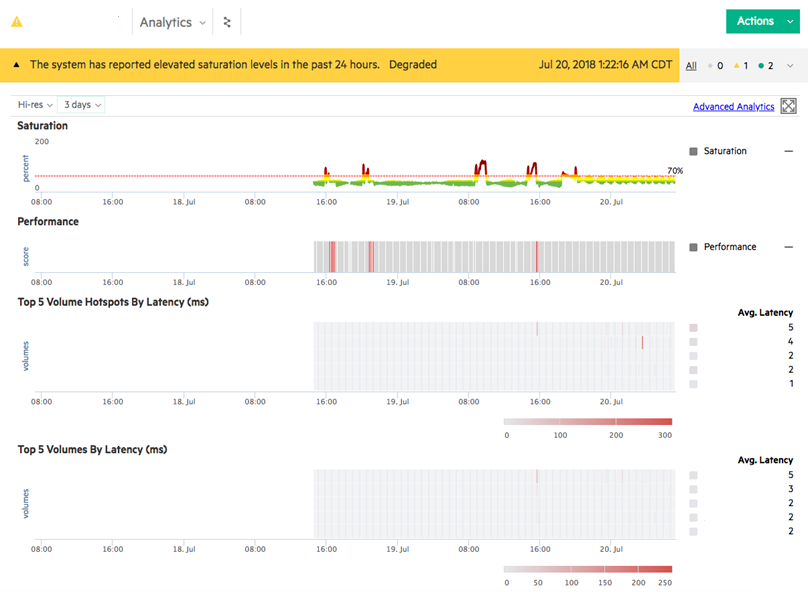

In the past, we were able to get a lot of performance information from 3PAR storage (IOPS, MB/s and latency for disks, volumes, groups, HBAs and so on), but you needed specialist skills to interpret the values. A common question was “Are these performance values good or bad?”

Now InfoSight and SSMC calculate saturation levels and available headroom with respect to throughput and show them on easy-to-interpret graphs. This can be done in real time, because analytics from InfoSight are now built into SSMC. New statistical models detect hotspots, saturation and a lot more. Thanks to the connection with InfoSight, it also shows how your 3PAR is performing against other similar systems around the world.

To see this, add the new panels to your dashboard or use the new “Analytics” dropdown menu on the System page.

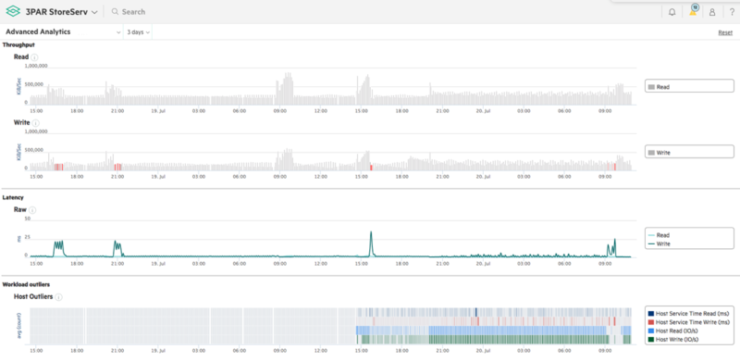

There is also a new “Advanced Analytics” category in the System Reporter.

Initially all the analytics features will only work with all-flash (SSD only) arrays.

Replication

There are also some enhancements to the configuration and management of Synchronous Data and File Persona replication.

SSMC Documentation

The following new manuals are available for SSMC 3.4

Still missing all the guides from HPE. Installation/user guides 🙁

Links for documentation on SSMC 3.4 added to post

Hi, I have deployed the OVF, but can’t set the IP address as I don’t have DHCP. I also can’t find any SSMC 3.4 documents. The VM console is asking for an ssmc login and password, which are unknown to me. Do you have any idea? Thank you

You should be able to set the IP address during the initial deployment. Use the TUI to update any necessary settings

I have found a solution for setting the IP address during OVF deployment, but am still missing support documents for the 3.4 version. Thank you

Docs now added to the post

Do you have a link to the migration tool? I can’t find it on HPE’s site.

Nevermind, it was on the ISO with 3.4

There are some files in the ISO file (link given by Richard); one of them is the migration tool

Yes the migration tool is part of the main SSMC 3.4 ISO

Hello!

Do you know appliance login/password?

Yes. user = ssmcadmin, see the admin guide page 55 for the password

Hi & thank you for the information on version 3.4! What are the default credentials for ssmc login after deploying the OVF? Can’t find any admin/user guide for version 3.4 so far… Thanks!

Post updated see the TUI section for authentication details.

We are currently evaluating SSMC 3.4 but are now faced with a problem which is a no-go for using SSMC 3.4 in production: there is no possibility to enter DNS servers!? Is there a trick to do so? We found a “resolv.conf” file, but are not able to edit this file with the correct values. Does someone have the “root” password for the appliance?

Regards,

Klaus

You can set a DNS server at step 9 of the initial deployment. If you missed this and it’s already deployed, use the TUI

Where is all the documentation?

Documentation links added

How can you set the IP address of the appliance? It’s not asking for this during the OVF deployment. Once booted it can’t be accessed unless you have the username/password

It should ask during deployment; if you missed it, use the TUI

What is the default login for a new appliance?

Nevermind, I just used the web access as usual

Hi, can I get a copy? When I try to download, there is an error message that my order has expired and I can’t download.

You can get it from the 3PAR software depot – https://h20392.www2.hpe.com/portal/swdepot/displayProductsList.do?category=3PAR

I must change the IP address; I need the root password

Read the TUI section of the post for details

What is the SSMC 3.4 default username and password?

Username = ssmcadmin. Password in the admin guide detailed in post

Can anyone suggest how to make this resilient? We have two sites. If the primary site SSMC goes down, how should we manage the storage on the secondary site and keep all the customisations from the primary SSMC? Does it support SRM replication? There is nothing about this in the guides.

If you have a third site you could deploy SSMC there

Excellent document Richard!!!

Thanks for the feedback!

Couldn’t find a post on virtual domains, which is why I chose to comment here.

We are implementing virtual domains, but need to share an ESX cluster between no domain and a domain. I read about the concept of domain sets. However, I am not sure how to share an existing ESX cluster host between no domain and a specified domain.

Would like some guidance

Domains are just a logical concept so you can have different groups with different privileges on the same system. For example, administrator A can only manage customer A and administrator B can only manage customer B. Just create the domain and add the objects (CPG, host and remote copy group) you want those administrators to manage.

Has anyone tried to reduce the number of vCPUs to 2 and the memory to 8GB?

I only have two 8200s in Peer Persistence mode.

Thanks

Just did a greenfield 3.4 deployment into vSphere 6.5 using the HTML5 client. The OVF deployment process ignored many of my inputs so I had to break into the appliance (bootloader menu then init=/bin/sh) just to set the ssmcadmin password to a known value. Ugh.

Good news is that it’s running happily now.