I recently took on support for a customer with a Peer Persistence setup. I had lots of questions when I first started looking into it, and I see lots of questions on the forums in this area, so I wanted to cover them off in this post.

What is Peer Persistence?

Peer Persistence is a 3PAR feature that enables your chosen flavour of hypervisor, either Hyper-V or vSphere, to act as a metro cluster. A metro cluster is a geographically dispersed cluster that allows VMs to be migrated from one location to another without interruption and with zero downtime. This transparent movement of VMs across data centres allows load balancing and planned maintenance, and can form part of an organisation’s high availability strategy.

Peer Persistence can also be used with Windows failover clustering to enable a metro cluster for services such as SQL Server on physical servers.

What are the building blocks for Peer Persistence?

The first thing you are going to need is two 3PAR systems with Remote Copy. Peer Persistence is effectively an add-on to Remote Copy and cannot exist without it. Remote Copy must be in synchronous mode, so there are some requirements around latency: the maximum round-trip latency between the systems must be 2.6ms or less, rising to 5ms or less from 3PAR OS 3.2.2.
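To make the latency rule concrete, here is a minimal sketch that checks a set of measured round-trip times against the limits quoted above. How you collect the samples is up to you; the function names and sample values are hypothetical, and the check deliberately uses the worst sample rather than the average, because synchronous replication has to cope with the slowest round trip, not the typical one.

```python
# Hypothetical helper: compare measured Remote Copy round-trip times (ms)
# with the limits quoted in this post: 2.6 ms, or 5 ms from 3PAR OS 3.2.2.


def parse_version(version: str) -> tuple[int, ...]:
    """Turn '3.2.2' (or '3.2.1 MU3', ignoring the MU suffix) into (3, 2, 2)."""
    base = version.split()[0]
    return tuple(int(part) for part in base.split("."))


def max_rtt_ms(os_version: str) -> float:
    """Maximum supported round-trip latency for a given 3PAR OS version."""
    return 5.0 if parse_version(os_version) >= (3, 2, 2) else 2.6


def latency_ok(samples_ms: list[float], os_version: str) -> bool:
    """True if the worst observed round trip is within the supported limit."""
    return max(samples_ms) <= max_rtt_ms(os_version)


samples = [1.9, 2.4, 2.7]  # made-up measurements
print(latency_ok(samples, "3.2.1 MU3"))  # False: 2.7 ms exceeds 2.6 ms
print(latency_ok(samples, "3.2.2"))      # True: the limit rises to 5 ms
```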

As this is effectively a cluster setup, a quorum is required, which HPE provides in the form of a witness VM deployed from an OVF template. This witness VM acts as the arbitrator for the cluster, verifying which systems are available and deciding whether an automatic failover to the second site should be initiated.

The other requirements are:

- The 3PAR OS must be 3.2.1 or newer for Hyper-V, and 3.1.2 MU2 or newer for VMware. For Hyper-V I would recommend at least 3.2.1 MU3, since this included a fix which removed the need to rescan disks on hosts after a failover.

- The replicated volumes must have the same WWN on both 3PAR systems. If you create a new volume and add it to Remote Copy this will happen automatically.

- You will need a stretched fabric that will allow hosts access to both systems

- Hosts need to be zoned to the 3PAR systems at both sites

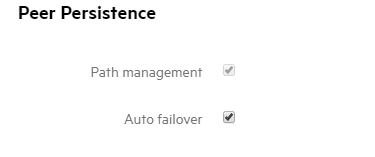

- When you create the remote copy groups you must enable both the auto_failover and path_management policies to allow automated failover

- FC, iSCSI, or FCoE protocols are supported for host connectivity. RCFC is recommended for the remote copy link.
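The remote copy group requirements above (synchronous mode, both Peer Persistence policies, identical WWNs) lend themselves to a simple pre-flight check. This is only a sketch: the `RemoteCopyGroup` structure, its field names and the WWNs below are hypothetical and would need to be filled in from whatever your array or management tool reports.

```python
from dataclasses import dataclass, field

# Policies this post says must be enabled on the remote copy group.
REQUIRED_POLICIES = {"auto_failover", "path_management"}


@dataclass
class RemoteCopyGroup:
    """Hypothetical description of a remote copy group (illustrative fields)."""
    name: str
    mode: str  # e.g. "sync" or "periodic"
    policies: set[str] = field(default_factory=set)
    # volume name -> (WWN on the source array, WWN on the target array)
    volume_wwns: dict[str, tuple[str, str]] = field(default_factory=dict)


def peer_persistence_problems(group: RemoteCopyGroup) -> list[str]:
    """List every requirement the group fails; an empty list means it passes."""
    problems = []
    if group.mode != "sync":
        problems.append(f"{group.name}: Remote Copy must be synchronous")
    for policy in sorted(REQUIRED_POLICIES - group.policies):
        problems.append(f"{group.name}: missing policy {policy}")
    for volume, (src_wwn, tgt_wwn) in sorted(group.volume_wwns.items()):
        if src_wwn.lower() != tgt_wwn.lower():
            problems.append(f"{group.name}: {volume} has different WWNs on the two arrays")
    return problems


# Made-up example: path_management is missing and csv02 has mismatched WWNs.
group = RemoteCopyGroup(
    name="rcg_hyperv",
    mode="sync",
    policies={"auto_failover"},
    volume_wwns={
        "csv01": ("60002AC0000000000000000100001234", "60002AC0000000000000000100001234"),
        "csv02": ("60002AC0000000000000000200001234", "60002AC0000000000000000300001234"),
    },
)
for problem in peer_persistence_problems(group):
    print(problem)
```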

Further requirements specific to hypervisor are:

Hyper-V

- Windows hosts must be set to a persona of 15

- For non-disruptive failover, Hyper-V hosts must be Windows Server 2008 R2 or 2012 R2

VMware

- ESXi hosts must be set to a persona of 11

- For non-disruptive failover ESXi hosts must be ESXi 5.x or newer

- Storage DRS must not be used in automatic mode

- It is recommended to configure datastore heartbeating with a total of four datastores, allowing two at each site

- Set the HA admission control policy to allow all the required workloads from the other site to run in the event of a failover
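The host persona and minimum 3PAR OS requirements differ per hypervisor, which makes them easy to get wrong when one array serves both. The sketch below encodes only the persona and OS minimums listed in this post (it does not check Windows or ESXi versions); the table layout and function names are hypothetical.

```python
# Hypothetical requirement table built from the lists above:
# hypervisor -> (required host persona, minimum 3PAR OS as (major, minor, patch, MU))
REQUIREMENTS = {
    "hyper-v": (15, (3, 2, 1, 0)),
    "vmware": (11, (3, 1, 2, 2)),
}


def parse_3par_os(version: str) -> tuple[int, int, int, int]:
    """Parse '3.2.1' or '3.2.1 MU3' into (3, 2, 1, 3); no MU counts as MU0."""
    base, _, mu = version.partition(" MU")
    major, minor, patch = (int(part) for part in base.split("."))
    return (major, minor, patch, int(mu) if mu else 0)


def host_problems(hypervisor: str, persona: int, os_version: str) -> list[str]:
    """Compare one host's persona and the array's OS version with the table."""
    required_persona, minimum_os = REQUIREMENTS[hypervisor]
    problems = []
    if persona != required_persona:
        problems.append(f"host persona is {persona}, expected {required_persona}")
    if parse_3par_os(os_version) < minimum_os:
        problems.append(f"3PAR OS {os_version} is older than the minimum for {hypervisor}")
    return problems


print(host_problems("hyper-v", 15, "3.2.1 MU3"))  # []
print(host_problems("vmware", 15, "3.1.2 MU1"))   # wrong persona and OS too old
```

Comparing tuples keeps the version logic short: Python compares them element by element, so 3.1.2 MU1 sorts below 3.1.2 MU2 without any special handling.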

The picture bit

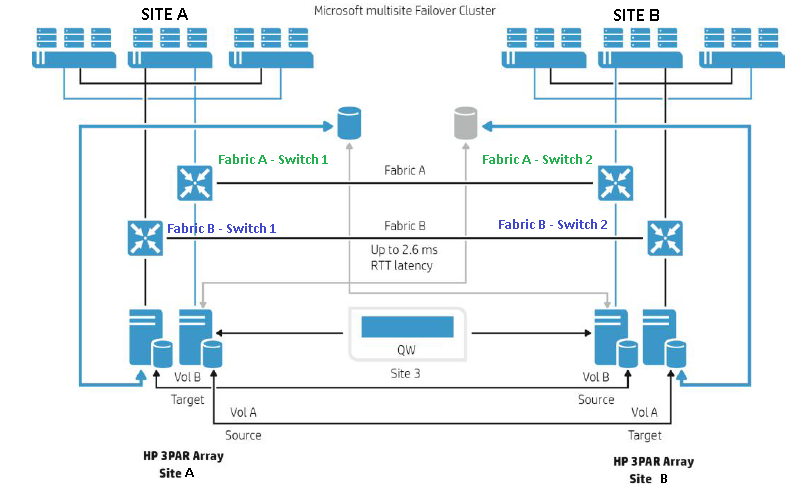

I have robbed the picture above from the HPE Peer Persistence documentation. It has more lines on it than the London Underground, but let me try to explain. There are two geographically dispersed data centres, sites A and B. Both sites contain three hypervisor hosts, shown at the top of the picture, and a 3PAR, shown at the bottom. The data centres are linked by a stretched fabric so that the zoning information is shared across the sites, and synchronous Remote Copy also runs across this link. Each host is zoned to the 3PAR systems at both sites.

At the top of the picture there is a blue cylinder at site A and a grey one at site B; this represents each volume being presented twice, once from each site. The volume has the same WWN on both systems, and by using ALUA one copy of the volume is marked as writeable (blue cylinder), whilst the other is visible to the host but marked as non-writeable (grey cylinder). In the event of a switchover, the paths to the volume at site A are marked as standby, whilst the paths to the volume at site B are marked as active.
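The key idea in the paragraph above is that the host never sees a different disk: the WWN stays the same and only the path states swap. Here is a toy model of that, assuming just two states, "active" and "standby"; in reality ALUA has more states and the arrays, not a script, change them, so treat the class and function names as hypothetical illustrations.

```python
from dataclasses import dataclass


@dataclass
class VolumePresentation:
    """One copy of a replicated volume as a host sees it (simplified model)."""
    site: str
    wwn: str
    path_state: str  # "active" (writeable) or "standby" (visible, not writeable)


def switchover(copies: list[VolumePresentation]) -> None:
    """Swap which site's paths are active; the WWN seen by the host is unchanged."""
    if len({copy.wwn for copy in copies}) != 1:
        raise ValueError("Peer Persistence requires the same WWN on both arrays")
    for copy in copies:
        copy.path_state = "standby" if copy.path_state == "active" else "active"


def active_site(copies: list[VolumePresentation]) -> str:
    """Return the site whose paths are currently active."""
    return next(copy.site for copy in copies if copy.path_state == "active")


wwn = "60002AC0000000000000000100001234"  # made-up WWN
copies = [VolumePresentation("A", wwn, "active"), VolumePresentation("B", wwn, "standby")]
print(active_site(copies))  # A
switchover(copies)
print(active_site(copies))  # B
```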

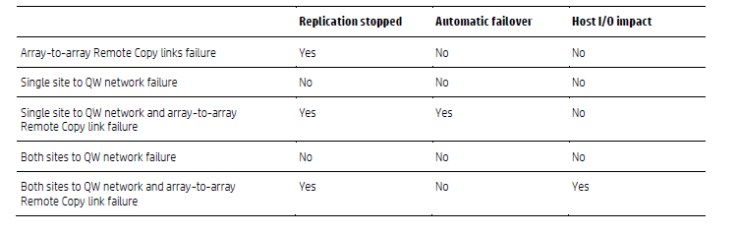

The quorum witness, shown at the bottom of the picture as QW, is a VM which must sit at a third site, not at site A or B, and must not rely on the storage it is monitoring. It is deployed using an OVF template and is available in Hyper-V and VMware versions; I will cover its deployment in another post. The job of the quorum witness is to act as an independent arbitrator and decide whether an automatic failover from one site to the other should occur. The witness VM essentially checks two things: the status of the Remote Copy link and the availability of the 3PAR systems. The action taken when it detects a change in one of these conditions is summarised in a table in the HPE Peer Persistence documentation. The key thing to take away from that table is that an automatic failover across sites will only ever occur if the witness detects that Remote Copy has stopped and one of the 3PAR systems cannot be contacted.
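The rule at the end of the paragraph above can be written as a small decision function. This is a simplified sketch of that one rule, not HPE’s full decision table, and it assumes "one of the 3PAR systems cannot be contacted" means exactly one: if the witness can reach neither array, there is no surviving system for it to hand over to. The function name is hypothetical.

```python
from itertools import product
from typing import Optional


def failover_target(rc_link_up: bool, array_a_ok: bool, array_b_ok: bool) -> Optional[str]:
    """Site that should take over automatically, or None for no failover.

    Simplified from this post: fail over only when Remote Copy has stopped
    AND exactly one of the two arrays cannot be contacted by the witness.
    """
    if not rc_link_up and array_a_ok != array_b_ok:
        return "A" if array_a_ok else "B"
    return None


# Print every combination to show how rarely an automatic failover happens.
for rc_up, a_ok, b_ok in product([True, False], repeat=3):
    print(f"RC link up={rc_up}, A reachable={a_ok}, B reachable={b_ok} -> {failover_target(rc_up, a_ok, b_ok)}")
```

Only two of the eight combinations return a site, which is the point of the witness: a broken replication link on its own, or a witness that has lost sight of one array while replication is still running, never triggers a failover.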

Enough chat, let’s implement this thing

To summarise everything we have talked about so far, I am going to list the high-level steps to create a 3PAR Peer Persistence setup. I am going to use a Hyper-V setup as an example, but the steps for other types of failover cluster and for VMware are very similar.

Infrastructure Steps

- I will assume you have synchronous replication up and running and meet the latency requirements as described above

- Verify you have the Peer Persistence licence

- Set up a stretched fabric across both sites

- If necessary, upgrade your 3PAR OS to the version listed in the requirements section

- Deploy the witness VM. See my full guide to deploying the 3PAR quorum witness VM for help with this

Host Steps

- Configure your zoning so all hosts are zoned to both 3PAR systems

- Check, and if necessary set, the correct host persona

- On the source system, create the remote copy group containing all the volumes requiring replication. From the SSMC main menu, select Remote Copy Groups, then Create.

- When creating the remote copy group, the WWNs of the source and target volumes need to be identical. To ensure this is the case, make sure the option to create the remote copy volumes automatically is selected

- Also when creating the remote copy group, ensure both tick boxes in the Peer Persistence section, path management and auto failover, are checked

- On each 3PAR, export the volumes to the hosts in the cluster, i.e. both the source and destination volumes should be exported to the cluster. Before doing the export, ensure the hosts have already been added to the cluster to avoid corruption

- You may need to rescan the disks in Disk Management once they are exported
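The export step is where a mistake is easiest to miss: every cluster node needs every volume from both arrays, and no host outside the cluster should see them. Below is a minimal sketch of that check, assuming you can list the cluster members and, per array, which hosts each volume is exported to; the data layout and host names are hypothetical.

```python
def export_problems(cluster_hosts: set[str], exports: dict[str, dict[str, set[str]]]) -> list[str]:
    """Check exports: exports[array][volume] is the set of hosts it is exported to."""
    problems = []
    # Every volume exported from either array should be exported from both.
    volumes = set().union(*(vols.keys() for vols in exports.values()))
    for array, vols in sorted(exports.items()):
        for volume in sorted(volumes):
            hosts = vols.get(volume, set())
            for host in sorted(cluster_hosts - hosts):
                problems.append(f"{volume} not exported to {host} from array {array}")
            for host in sorted(hosts - cluster_hosts):
                problems.append(f"{volume} exported from array {array} to {host}, which is not in the cluster")
    return problems


cluster = {"hv01", "hv02"}  # made-up Hyper-V node names
exports = {
    "A": {"csv01": {"hv01", "hv02"}},
    "B": {"csv01": {"hv01"}},  # hv02 was missed on the second array
}
for problem in export_problems(cluster, exports):
    print(problem)
```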

Management

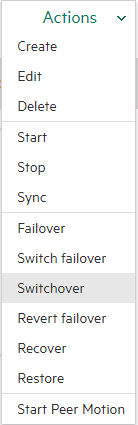

To change which 3PAR system is actively serving the data and which is on standby, select Remote Copy Groups, highlight the group you wish to change on the system where it is currently active, and choose Switchover.

To stay in touch with more 3PAR news and tips connect with me on LinkedIn and Twitter.

Further Reading

Implementing vSphere Metro Storage Cluster using HPE 3PAR Peer Persistence

Patrick Terlisten has written a nice blog series on Peer Persistence, which is worth reading from the start.

Thanks 3ParDude, good timing as we are in the process of deploying 8400s with Peer Persistence. Looking forward to the witness install blog post; I’ve got that task coming up soon.

Hi 3pardude!

It is also required to use VMW_PSP_RR (Round Robin) as the PSP plugin for VMware Metro Cluster configurations using 3PAR storage (http://www.vmware.com/resources/compatibility/detail.php?deviceCategory=san&productid=39468&deviceCategory=san&details=1&releases=275&keyword=3par&isSVA=0&page=2&display_interval=10&sortColumn=Partner&sortOrder=Asc). You can see how to set the right policy for 3PAR storage using PowerCLI or esxcli here: https://vnote42.wordpress.com/2016/04/12/create-psp-rule-for-hpe-3par/

Regards

Wolfgang

Hi 3pardude,

Can I implement peer persistence in a single site?

Our idea is to achieve high availability for the data using two 3PARs in the same datacenter, with the quorum witness installed at a second site. The latency between the sites is higher than 5ms.

Thanks

Hi

This would kind of defeat the purpose of Peer Persistence which protects against a site failure. That’s why the witness is in a 3rd site, to detect when one of the other sites fails and initiate a fail over. In this scenario if the site failed you would lose everything anyway and there would be nowhere to fail over to.

I know, and I agree with you, but our customers are looking for a high availability solution for the data and our second site does not qualify because of the latency.

We still have plans for a DR site, but for now we are planning just HA.

Do you think that Peer Persistence could be considered for this scenario?

Thanks

This isn’t limited to Hyper-V; it can work with Windows clustering in general. For example, we’ve got a SQL cluster using this principle. I’m guessing file sharing would also be feasible.

You make a good point: Peer Persistence does work with Microsoft clustering in general, so it can be used with applications like File Servers, SQL Server, Exchange and SharePoint.

When the source and backup volumes are presented from each of the two participating arrays to the same host, should they be exported under the same LUN ID from each array, or does it not matter?

This is effectively going to happen automatically; remember that remote copy groups are built from virtual volume groups. If you have an existing remote copy group with 3 volumes and the LUN ID of the last volume is 3, when you create a 4th volume it will receive a LUN ID of 4. If you also choose the option to automatically create a volume on the target, it is going to be added to the volume group on the target, which will again choose the next increment, 4.
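The arithmetic in that reply boils down to "next ID = highest existing ID + 1", applied independently on each array. A tiny sketch of that simplified model (the function name is hypothetical, and it assumes the volume group is non-empty and that both arrays start with the same LUN IDs) shows why the two sides stay in step:

```python
def next_lun_id(existing_lun_ids: list[int]) -> int:
    """Next LUN ID in a non-empty volume group: one above the highest in use."""
    return max(existing_lun_ids) + 1


source_luns = [1, 2, 3]  # the 3 existing volumes on the source array
target_luns = [1, 2, 3]  # their automatically created copies on the target
print(next_lun_id(source_luns), next_lun_id(target_luns))  # 4 4
```

If the two volume groups ever diverge (for example, a volume exported manually on one array only), this model no longer guarantees matching IDs, so it is worth checking the LUN IDs on both arrays after adding volumes.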

Hi Richard,

The document below cites Peer Persistence support for XenServer.

However, I cannot find this support cited anywhere else. Do you know whether Peer Persistence is supported on XenServer?

http://h20566.www2.hpe.com/hpsc/doc/public/display?sp4ts.oid=5044394&docId=emr_na-c02663749&docLocale=en_US

I have not set up Peer Persistence with XenServer, but as you say, this document says it’s supported. Have you checked SPOCK and the other documents referenced?

Hi Richard, I’m unable to find the HPE doc on this. It is available on Scribd. Are you able to attach the PDF if you have it? Thanks, Bruce

Hey Bruce. Sure here is the Peer Persistence PDF for VMware – https://www.hpe.com/h20195/V2/getpdf.aspx/4AA4-7734ENW.pdf

What is the best method to do switchover when you know one site is going down for maintenance?

Just do a normal switchover, which is performed online. Then stop replication, auto failover and the quorum witness.

Hi Richard

I am in the process of implementing a Hyper-V failover cluster with 3PAR failover using Peer Persistence across two sites. I have been having issues with HPE 3PAR Cluster Extensions (CLX), but in your article above you did not mention the need to install CLX. Can this be done without CLX? CLX seems to be the problem, in that I get corruption during migration from one site to the other. It would be good to hear if you did this without HPE Cluster Extensions. Many thanks

Hi

CLX and Peer Persistence are different tools. Peer Persistence allows you to create a metro cluster and protect against site and array failure. CLX is setup at the cluster level and directly controls the cluster it is installed on. Take a look at both technologies and see which one suits your needs best.

Hi 3ParDude. Is it possible to implement 3PAR Peer Persistence with a LAN between metropolitan sites, or only with SAN/Fibre Channel networks?

Yes, iSCSI is also possible.