So far in this 3PAR 101 series we have looked at 3PAR fundamentals and the system's unique approach to RAID, and in Part 2 we looked at Common Provisioning Groups (CPGs). Now we get to the stage where we can provision storage to hosts using virtual volumes and VLUNs!

Virtual volumes (VVs) are HPE's term for what would most commonly be called a LUN. A LUN that has been presented, or exported, to a host is called a VLUN. Virtual volumes draw their space from CPGs and come in two varieties: Fully Provisioned Virtual Volumes (FPVV) and Thin Provisioned Virtual Volumes (TPVV). An FPVV allocates all of its space upfront, so when a 100GB VV is created, 100GB is immediately taken from the CPG. A TPVV only uses the space that is actually demanded, so if a 100GB VV is created and only 50GB of it is used, only 50GB is drawn from the CPG.
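To make the difference concrete, here is a minimal Python sketch of how much CPG space a single volume draws under each provisioning type. It is a toy model, not a 3PAR API: the function name is hypothetical, and it assumes a thin volume consumes exactly what has been written, whereas the array really allocates in fixed-size regions, so actual usage rounds up slightly.

```python
def cpg_space_used_gb(size_gb: float, written_gb: float, thin: bool) -> float:
    """Return the CPG space (GB) a single virtual volume consumes.

    Toy model: a thin volume is assumed to use exactly what has been written.
    """
    if not 0 <= written_gb <= size_gb:
        raise ValueError("written_gb must be between 0 and size_gb")
    # FPVV: the whole size is taken from the CPG when the volume is created.
    # TPVV: space is drawn from the CPG only as data is written.
    return written_gb if thin else size_gb


if __name__ == "__main__":
    # The 100GB volume with 50GB written, from the paragraph above.
    print("FPVV:", cpg_space_used_gb(100, 50, thin=False))  # FPVV: 100
    print("TPVV:", cpg_space_used_gb(100, 50, thin=True))   # TPVV: 50
```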

A thin provisioning licence is required to use TPVVs and, assuming it is in place, TPVV is the default type of VV created. Systems purchased today include the full licence bundle, so this is less of a concern.

Another type of virtual volume was introduced with the 3PAR all-flash systems: the Thin Deduplicated Virtual Volume (TDVV). As the name suggests, this was a volume that was both deduplicated and thin provisioned. 3PAR OS 3.3.1 deprecated the TDVV volume type and made dedupe a property of a volume that can simply be turned on. 3.3.1 also introduced the ability to enable compression on volumes.

Creating a VV and VLUN – SSMC

Enough theory; let's get on and create a VV, first in the 3PAR SSMC:

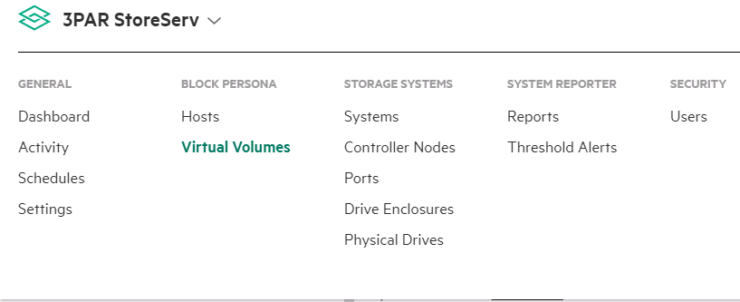

1 Open SSMC and from the main menu select Virtual Volumes

2 Click the green Create Virtual Volume button on the top left of the screen

3 What appears next is the simple Virtual Volume creation screen. The only information you have to supply is:

- Name – the name you wish to call the Virtual Volume

- System – If you are connected to more than one system, choose the system you want to create the volume on

- Size – How large the volume needs to be

Further fields you can change if you wish:

- Provisioning – choose from thick or thin provisioning

- CPG – Select a different CPG for storing the virtual volume in

4 If you just need a basic volume, you are done. If you need to set any of the following, choose Edit additional settings:

- Copy CPG – The CPG you want metadata and snapshots to be stored in

- Number of volumes – if you want to create multiple volumes at the same time

- Volume sets – Add the volume to a volume set

- Comments – Add any comments you wish

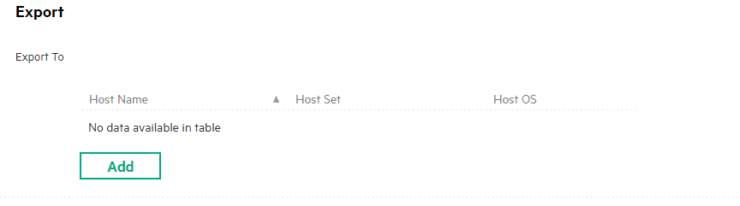

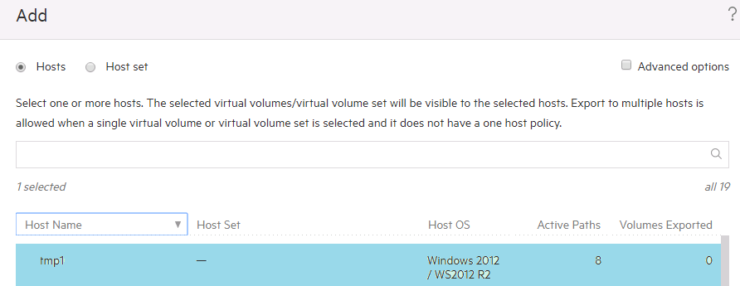

5 Next we present the newly created volume to a host. Still in the Create Virtual Volume wizard, select Add.

In the box that appears, choose to export the volume to a host as shown, or to a group of hosts using a host set.

To enable dedupe and compression on the volume, follow the 3PAR Dedupe + Compression Deep Dive.

Creating a VV – CLI

This section looks at how we create a volume and then export it to a host using the command line.

```
createvv -tpvv -pol zero_detect NL_R6 VirtualVolume2 100G
```

Let's break down the CLI options a little:

- createvv – core command

- -tpvv – create a thin provisioned volume

- -pol zero_detect – scan incoming writes for zeros

- CPG name – in my case NL_R6

- VV name – in my case VirtualVolume2

- Size of volume – in my case 100GB

Creating a VLUN – CLI

```
createvlun VirtualVolume2 auto host1
```

- createvlun – core command

- VV name – in my case VirtualVolume2

- Lun ID – auto in this case

- Hostname – host1
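If you need several volumes at once, the two commands above can be generated rather than typed. The Python sketch below is a hypothetical helper (`build_commands` is not part of any HPE tool) that only builds command lines using the options shown in this article; it does not connect to the array, so you would paste or script the output into a 3PAR CLI session yourself.

```python
import shlex


def build_commands(cpg: str, host: str, names: list[str], size: str = "100G") -> list[str]:
    """Return createvv and createvlun command lines for each volume name.

    Only the options used in this article are emitted; nothing is executed.
    """
    commands = []
    for name in names:
        # Thin volume with zero detect, as in the createvv example above.
        commands.append(shlex.join(
            ["createvv", "-tpvv", "-pol", "zero_detect", cpg, name, size]))
        # "auto" leaves the choice of LUN ID to the array.
        commands.append(shlex.join(["createvlun", name, "auto", host]))
    return commands


if __name__ == "__main__":
    for line in build_commands("NL_R6", "host1", ["VirtualVolume2"]):
        print(line)
```

Run with the CPG, volume and host names from this article, it prints the same two commands shown above; any additional volume names for a batch are your own choice.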

If you only use the new management tools for 3PAR, that's it, you're done, finito! This blog has over 150 3PAR articles; check out a selection of them here. If you missed any of the 3PAR 101 series, catch up on them:

3PAR 101 – Part 3 – Virtual Volumes and Vlun’s

Creating a VV – 3PAR Management Console

If you still use the 3PAR management console then read on:

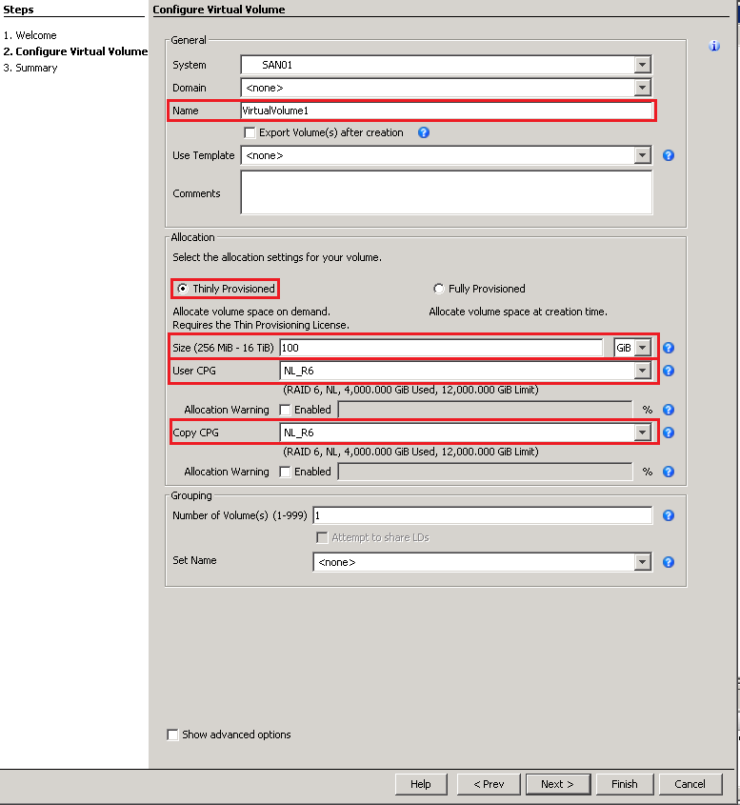

1 In the management pane select Provisioning and then from the common actions pane select Create Virtual Volume

2 Next you will see a welcome screen with a lot of useful information on creating VVs. If you do not want to see it again, tick the Skip this step box and click Next

3 The basic information you need to complete when creating a VV is highlighted in the screenshot below. Thin provisioned will be selected by default; enter the name and size, then the CPG you want the VV to sit in. Remember that the CPG you choose determines the performance and availability level of the volume. The copy CPG is only needed if you use snapshots

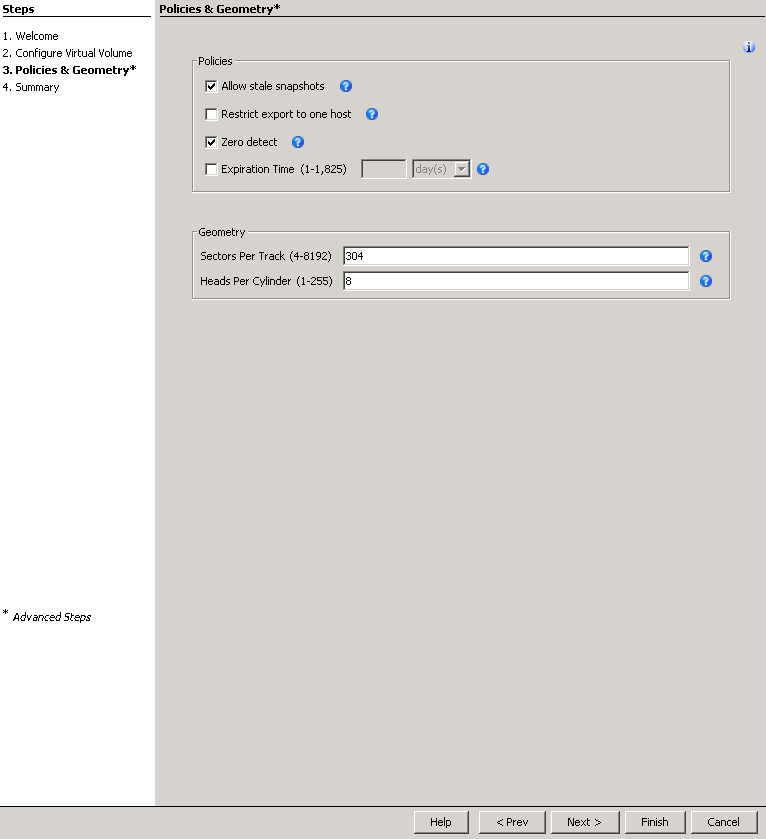

4 The screenshot below shows what you will see if you tick the Advanced options checkbox. Generally you can leave all of this at the defaults and will not need to select advanced options

5 The final screen shows a summary of the selections you have made; if you are happy, just click Finish

Creating a VLUN – 3PAR Management Console

Next we need to export (provision) the virtual volume to the host

1 In the management pane select Provisioning and then from the common actions pane select Export Volume

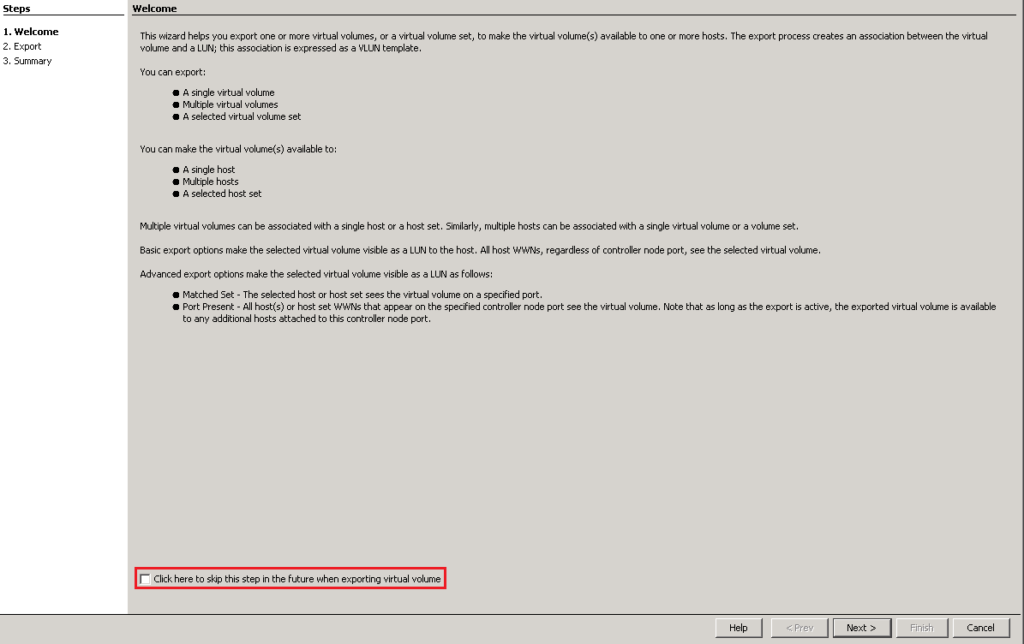

2 Next you will see a welcome screen with a lot of useful information on creating VLUNs. If you do not want to see it again, tick the Skip this step box and click Next

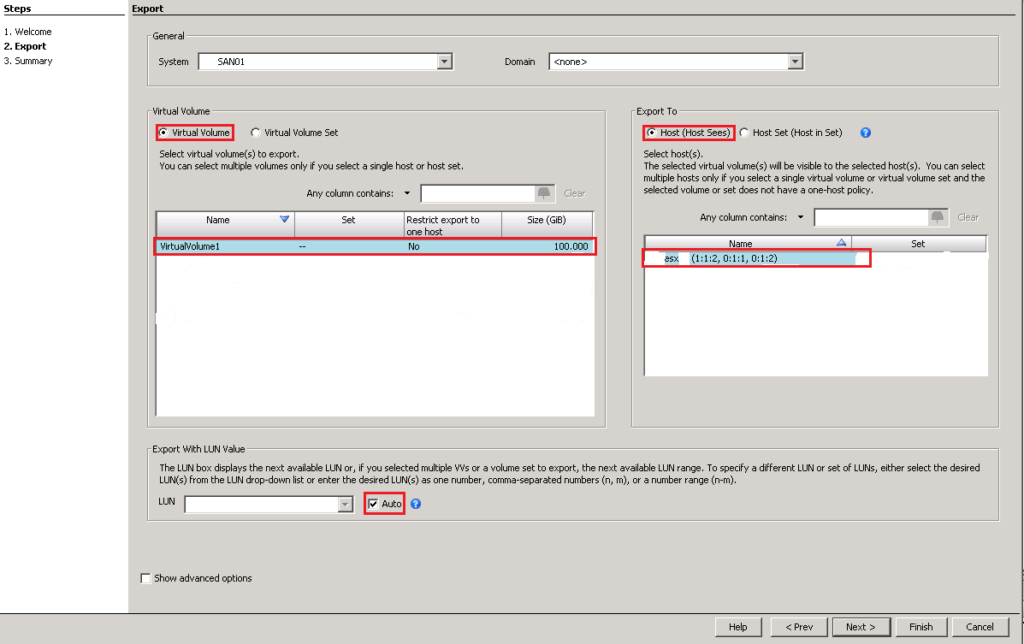

3 On the left-hand side of the screen, select the name of the volume to export; on the right-hand side, choose the host to export to. Ticking auto for Export with LUN values will choose the LUN ID for you automatically. When you're happy with your selection, click Next

4 The final screen shows a summary of the selections you have made; if you are happy, just click Finish

If you missed them, check out parts One (3PAR architecture) and Two (CPGs) of this 3PAR beginner guide series.

To stay in touch with more 3PAR news and tips connect with me on LinkedIn and Twitter.


Dear, thanks for your great and simple article.

I have a question about the availability of the whole 3PAR system.

How many physical disks can fail at the same time on a 3PAR without losing or affecting data?

With older arrays like the EVA, we lost some data when many disks failed at the same time and RAID could not rebuild the data.

I know that the “spare” concept here is virtualized rather than a physical spare (“using spare chunklets”).

But what is the maximum number of simultaneously failed physical disks, beyond which the 3PAR would lose data?

Hi welcome to the blog.

The simple answer is: it depends. First, it depends on the RAID level you have chosen, which disks fail, and the sparing level you have set for your system. You can see your sparing setting by running showsys -param from the CLI and looking for SparingAlgorithm.

Every CPG is made up of logical disks. As covered in my earlier post, logical disks are chunklets arranged as rows of RAID sets. So if, for example, your CPG has a setting of RAID 5 3+1, it will be built from many RAID 5 3+1 sets. Any RAID 5 set can tolerate a single disk failure, so how many disks can fail depends on which RAID sets the failures land in. For example, if one disk fails and then a second disk fails, but the second failure is in a different RAID set, no data is lost. However, if both failures are in the same RAID set, you will lose data.

This assumes the system has not yet finished rebuilding the data to spare chunklets. Once the system completes sparing, the counter is effectively reset. In summary, as with any storage system, it's best not to second-guess it; get any failed disk replaced straight away.
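The per-RAID-set reasoning can be sketched in Python. This is a simplified model under stated assumptions: each RAID set is a row of chunklets on distinct disks, a RAID 5 set tolerates one failed member and a RAID 6 set two, and `failed_disks` only holds disks whose data has not yet been rebuilt to spares. The function name and disk layout are hypothetical. On a real array each disk's chunklets belong to many RAID sets, which the example hints at by placing every disk in two sets.

```python
# How many failed members a single RAID set can absorb (simplified).
TOLERANCE = {"RAID5": 1, "RAID6": 2}


def sets_losing_data(raid_sets, failed_disks, raid_level="RAID5"):
    """Return the indices of RAID sets with more failed members than they tolerate.

    raid_sets: one tuple of disk IDs per RAID set (a row of chunklets).
    failed_disks: failed disks whose data has NOT yet been rebuilt to spares;
    once sparing completes they should be dropped from this collection.
    """
    tolerance = TOLERANCE[raid_level]
    failed = set(failed_disks)
    return [i for i, members in enumerate(raid_sets)
            if len(failed.intersection(members)) > tolerance]


if __name__ == "__main__":
    # Hypothetical RAID 5 3+1 sets spread across 8 disks.
    sets = [(0, 1, 2, 3), (4, 5, 6, 7), (0, 2, 4, 6), (1, 3, 5, 7)]
    print(sets_losing_data(sets, {0, 5}))           # [] - disks share no set
    print(sets_losing_data(sets, {0, 1}))           # [0] - both in set 0
    print(sets_losing_data(sets, {0, 1}, "RAID6"))  # [] - RAID 6 absorbs two
```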

Hi,

Regarding spare chunklets, is it possible for a 3PAR to run out of them?

Is the 3PAR able to rebuild to spare chunklets from or to a different RAID level?

Hi, a number of chunklets are dedicated as spares when the system is first set up. If spares are then required, the system first looks for local spare chunklets, then local free chunklets. It then looks for remote spare chunklets and finally remote free chunklets. So not only spare but also free chunklets can be used, which means that as long as there is free disk space the system will not run out. Local chunklets are those whose logical disk is owned by the same node that owned the failed chunklet.
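The search order described above can be sketched as a simple priority list. This Python model is illustrative only: the `Chunklet` class and `pick_replacement` function are hypothetical, "free" here means a non-spare chunklet that is not in use, and it ignores the additional placement rules the real system applies (it only skips disks passed in `exclude_disks`, such as the failed disk).

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class Chunklet:
    disk: int
    owner_node: int        # preferred owner node (simplified)
    is_spare: bool         # reserved as a spare when the system was set up
    in_use: bool = False   # already holding data


def pick_replacement(chunklets: Iterable[Chunklet], failed_owner_node: int,
                     exclude_disks: frozenset = frozenset()) -> Optional[Chunklet]:
    """Pick a chunklet to rebuild onto, following the search order above."""
    available = [c for c in chunklets
                 if not c.in_use and c.disk not in exclude_disks]
    # Search order: local spare, local free, remote spare, remote free.
    for want_local, want_spare in [(True, True), (True, False),
                                   (False, True), (False, False)]:
        for c in available:
            is_local = c.owner_node == failed_owner_node
            if is_local == want_local and c.is_spare == want_spare:
                return c
    return None  # no spare or free space left anywhere


if __name__ == "__main__":
    pool = [
        Chunklet(disk=4, owner_node=1, is_spare=True),   # remote spare
        Chunklet(disk=5, owner_node=0, is_spare=False),  # local free
        Chunklet(disk=6, owner_node=0, is_spare=True),   # local spare
    ]
    # The failed chunklet was owned by node 0 and sat on disk 2.
    choice = pick_replacement(pool, failed_owner_node=0,
                              exclude_disks=frozenset({2}))
    print(choice.disk)  # 6
```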

Hi,

What do you mean by local and remote chunklets?

Regards,

Each logical disk has a preferred owner, i.e. a preferred node. If the spare chunklets are part of a logical disk with the same preferred owner, they are local; if they have a different owner, they are remote.

Hey 3PAR Dude! Loving the blog, now that I'm on KT for an account on which I'll have to manage 3PAR. Just a quick one: on an existing volume, is increasing capacity (via the GUI) as simple as hitting Edit and changing the volume's size?

Hi, glad you are enjoying the blog. Yes, that is one way to do it.

Question: is this correct? To create a 1TB LUN, the steps are: 1. create a CPG; 2. create a virtual volume, linking it to the CPG and server, and check Export after creation. When finished, everything looks great. This is on a Red Hat 6.8 server. Next question: on the server, would one have to mount it after creating it in the 3PAR? What if, after creation, the server does not list it in the file system?

Almost. When you create a volume you must choose which CPG to create it in, so it is already linked to the CPG. Then you can export it to a server. Once you have exported it, you should just see it. Use showvlun to make sure everything looks OK, and check your zoning.

Hi 3PAR Dude, this blog is very useful, thank you for that. I have a question, please clarify. I have a request to create 30+ VVols and map them to a cluster (2 Windows hosts). Are these steps correct and in the right order? 1) Create host sets 2) Create hosts (2 here) 3) Add those 2 hosts to the host set 4) Create a VV set 5) Create VVols 6) Add the VVols to the VV set 7) Map the VV set to the host set.

If the above steps are correct, how will the LUN IDs be assigned? Is the LUN ID order based on VVol creation order (e.g. if we create 30 LUNs, will the first one created have an ID of 0)?

Hi,

Suppose a host is connected to node 2 and node 3. For an 80GB LUN, suppose the LDs are equally distributed across node0, node1, node2 and node3 at 28GB per node (21GB data + 7GB parity). How will the host use the LDs on node0 and node1, given that it is connected only to nodes 2 and 3? Please explain.

We have two 3PAR 7450s in our environment. We get virtually no dedupe; 95% of our virtual volumes are TDVVs. The dedupe datastores consume roughly 40TB of storage on each array. Since we get no tangible space savings from dedupe, is it safe to say that we have 80TB of wasted capacity (40TB on each array used for the dedupe datastores)?

Hi Stan, thanks for the question. If, as you say, you are not getting a good dedupe ratio, there is little value in having the volumes as TDVVs. It may, however, be worth waiting until 3.3.1 comes out, as it includes a rewrite of the dedupe code. After upgrading to 3PAR OS 3.3.1, you will need to create a new CPG and then move the volumes to it using DO (Dynamic Optimization) to take advantage of the updated dedupe. I covered the enhanced dedupe here: https://d8tadude.com/2017/02/21/3par-dedupe-compression/.

Reach out to me on LinkedIn if you need to discuss further https://uk.linkedin.com/in/richardarnold2

Hi 3PAR Dude,

Thanks for your great article / blog!

I have a question about vvsets and vluns…

We have two vvsets “A” and “B”

“A” vvset – active vluns: 14-28

“B” vvset – active vluns: 29-44

I want to add a new VV to vvset A, but I get an error:

Unable to expand vv set A due conflict on lun 29, set X

Because vvset “B” reserves LUN 29, I cannot add it to vvset “A”…

Is it possible to add this VV to this vvset? Maybe by adding new, free LUNs?

Hi, thanks for the comments. Vvset A's LUN IDs run straight into vvset B's, so adding another volume to A would need LUN 29, which B already uses; that is why the system will not create another VLUN and you get the error. This is the challenge with vvsets. To avoid it in future, leave room for growth between your vvset LUN ID ranges, e.g. vvset 1 uses 0-20 and vvset 2 uses 21-40, even if each set starts with only a few volumes. In this situation the easiest option is to start a new vvset and add the new volume to it.
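The clash in this question comes from the two vvsets' LUN ID ranges sitting back to back. A small Python sketch shows the check, assuming each vvset uses a contiguous LUN range and grows by taking the next ID after its last one; `next_lun_conflict` is a hypothetical helper, not a 3PAR command.

```python
def next_lun_conflict(vvsets: dict[str, tuple[int, int]], name: str):
    """Return the vvset that blocks growing `name` by one LUN, or None.

    vvsets maps a set name to its (first_lun, last_lun) range, inclusive.
    Assumes each set's LUN IDs are contiguous and it grows upwards.
    """
    needed = vvsets[name][1] + 1
    for other, (first, last) in vvsets.items():
        if other != name and first <= needed <= last:
            return other
    return None


if __name__ == "__main__":
    # The layout from the question: A uses 14-28, B uses 29-44.
    print(next_lun_conflict({"A": (14, 28), "B": (29, 44)}, "A"))  # B
    # With a gap, vvset1 can grow without reaching vvset2.
    print(next_lun_conflict({"vvset1": (0, 4), "vvset2": (21, 25)}, "vvset1"))  # None
```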

Hi 3PAR Dude,

Help me out: when I try to export a virtual volume to a host, on selecting the host it shows “The selected host does not have active paths. The exported virtual volumes or virtual volume set will not be visible to the host”. If I continue anyway, the volume is not listed on the ESXi host.

Hi

Sounds like your host is not connected to the 3PAR correctly. Is it zoned in correctly?

You can see the paths for your hosts at the CLI with showhost -pathsum. I suspect this host has no paths. Once you resolve the path issue you will be able to present the LUN.


Hello, thanks for the posts. I just inherited two 3PARs and these posts help a lot. I do have a question. Can I have a mix of drive sizes in my 3PAR, and is there a minimum number of drives of the same size I should have? Currently all drives are 900GB, but replacement parts are 1.2TB. Can I replace a dead 900GB drive with a new 1.2TB drive, which would be the only 1.2TB drive in the array until another drive dies? From your description of chunklets I think I could, but I'm not totally sure.

Thanks,

Scott

Generally you need 4 of any new drive type; check the QuickSpecs, which give the exact disk rules. Yes, you can mix drive sizes in a CPG. Personally I don't recommend it, since once the smaller drives are full and you are writing only across the remaining larger disks, your stripe will be much narrower. In summary, yes it's possible, just harder to manage.

Your blog has saved me quite a bit. I am still a beginner and am trying to learn the ways of 3PAR. If I attach a cage with drives, am I able to create CPGs from just those drives, without any spare chunklets from the system or admin CPG merging with them? All drives are FC device type. All in all, I would like to test these for future replacements of our systems currently in production.
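The narrowing stripe can be illustrated with a rough Python model. It assumes equal-size chunklets, so a drive's capacity is just a chunklet count (roughly 900 for a 900GB drive if you assume 1GB chunklets), and that new chunklets are spread across every disk that still has free space; it ignores spare reservations, formatting overhead and RAID set width rules. `stripe_width_profile` is a hypothetical name.

```python
def stripe_width_profile(disk_chunklets):
    """Describe how many disks can share new writes as the array fills.

    disk_chunklets: free chunklet count per disk.
    Returns (width, layers) pairs: `layers` rounds of allocation during
    which new chunklets can be spread across `width` disks.
    """
    profile = []
    remaining = sorted(c for c in disk_chunklets if c > 0)
    used = 0
    while remaining:
        smallest = remaining[0]
        # Every disk still in `remaining` gets one chunklet per round
        # until the smallest of them is full.
        profile.append((len(remaining), smallest - used))
        used = smallest
        remaining = [c for c in remaining if c > smallest]
    return profile


if __name__ == "__main__":
    # Eight 900GB drives plus a single 1.2TB replacement.
    print(stripe_width_profile([900] * 8 + [1200]))  # [(9, 900), (1, 300)]
```

In this model the last 300 or so chunklets can only land on the single larger disk, which is why a mix of sizes makes the CPG harder to manage.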

The system will automatically reserve a proportion of the chunklets on the disks as spares.